Discover the science powering Forbrain®

Our pioneering technology improves voice perception for enhanced auditory processing, all thanks to bone conduction and a dynamic filter.

A research-backed, revolutionary device

Forbrain is a learning device scientifically proven to improve cognitive processes and outputs such as voice quality, sound discrimination, speech fluency in people who stutter, general cognitive functioning, and reading ability. Created by the owners of the Tomatis® Method, Forbrain uses the voice to train the brain to process sensory information more effectively.

From the moment you speak, the device modulates the sound of your voice using the dynamic filter. This revolutionary technology analyzes and enhances the voice, amplifying frequencies and rhythm. The headphones immediately transmit the sounds back to you through bone conduction via the temporal bones, which retrains the brain’s auditory feedback loop.

Learn about the science behind Forbrain®

Scientific Studies on Forbrain

Brainlab – Cognitive Neuroscience Research Group

The potential effect of Forbrain as an altered auditory feedback device

North China University of Science and Technology

Speech-auditory feedback training on cognitive dysfunctions in stroke patients

Brainlab – Cognitive Neuroscience Research Group

The potential use of Forbrain in stuttering: A single-case study

Universidad Internacional De La Rioja

Study of Speech Fluency, Memory and Attention with Forbrain

University of Barcelona

A scientific single case study on speech, auditory processing and attentional strengthening with Forbrain

The Auditory Process

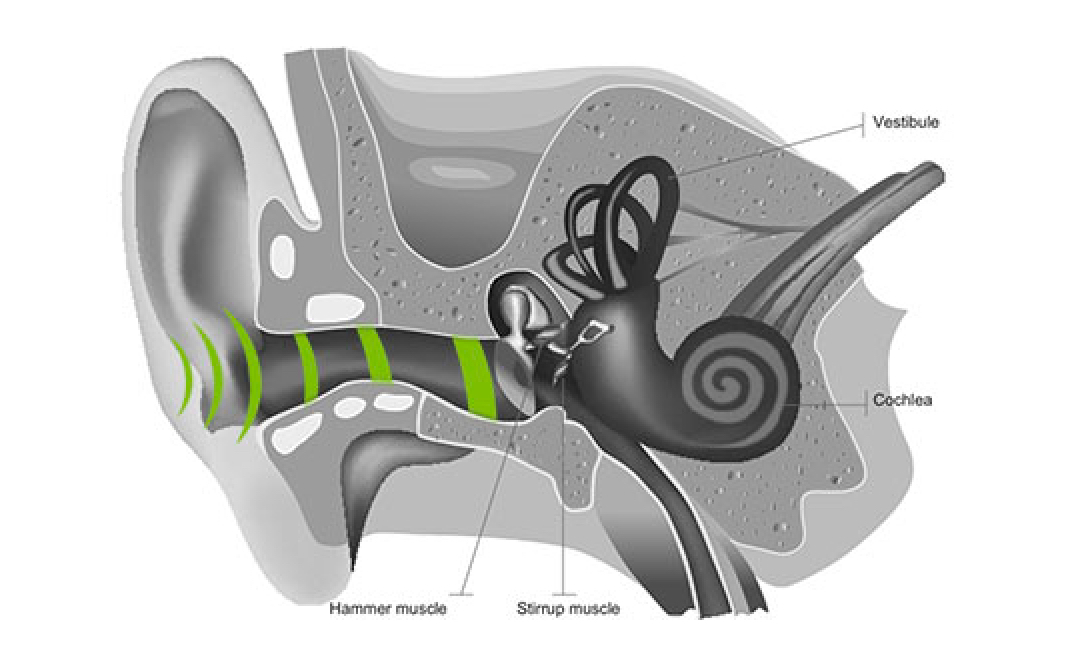

Air conduction

The experience of perceiving sound starts when sound waves are captured by your outer ears, the visible structures on either side of your head. In a process known as air conduction, the sound waves travel through the air in the ear canal to the middle ear, where they reach the ossicles. This structure is made up of three tiny bones that transmit sound vibrations to the inner ear.

Here, the cochlea converts these vibrations into electrical impulses and sends them along the auditory nerve to the brain, where you use this information to navigate the world.

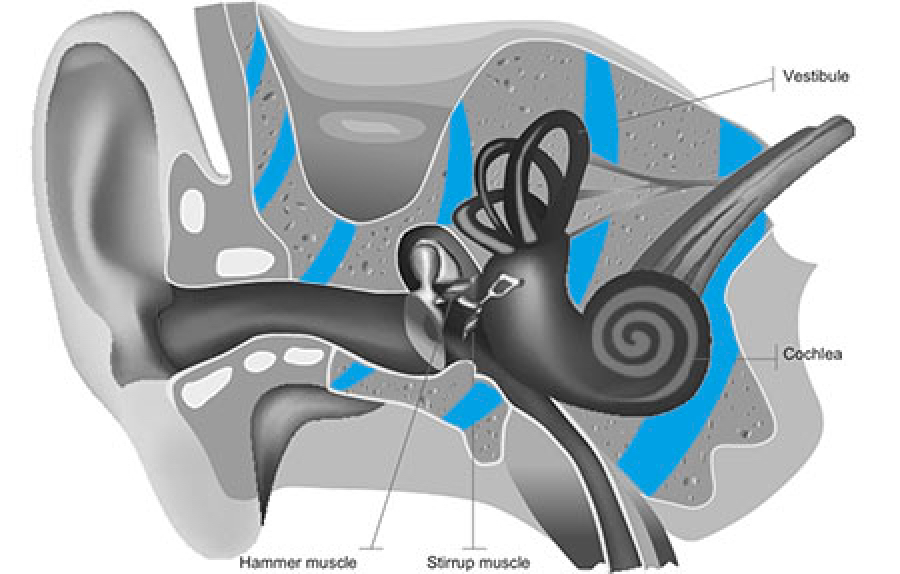

Bone conduction

Air conduction isn’t the only way we perceive sound, however. Many people are surprised to discover we mostly perceive our voice through the bones in our heads.

To experience this yourself, block your ears and speak out loud. You’re still able to hear your voice because vibrations in the cranial bones carry sound directly to the inner ear, which transmits the information along the auditory nerve as signals to the brain. This sound transmission bypasses the outer and middle ear and is 10x faster than air conduction.

Sound traveling by air conduction can be disturbed by background noise, and some people have difficulties with air conduction itself. For these reasons, Forbrain favors bone conduction as the most relevant way to hear yourself.

The auditory feedback loop

Have you ever noticed how you adjust the volume of your voice when in a noisy environment such as a busy street? If you have, then you’ve experienced the auditory feedback loop. This is the natural process in which we perceive, analyze, assimilate, and continually adjust our speech.

For the loop to function properly, we effortlessly activate our abilities in auditory discrimination, phonological awareness, and the integration of rhythm. These skills are an innate part of human functioning and are necessary for all learning processes.

Anyone can improve their auditory feedback loop, whether they want to develop general learning skills or experience loop interruptions due to cognitive or emotional issues.

Forbrain optimizes this auditory process and retrains the brain to significantly improve:

- Speech & communication

- Attention & focus

- Memory

- Learning

- And many other cognitive processes

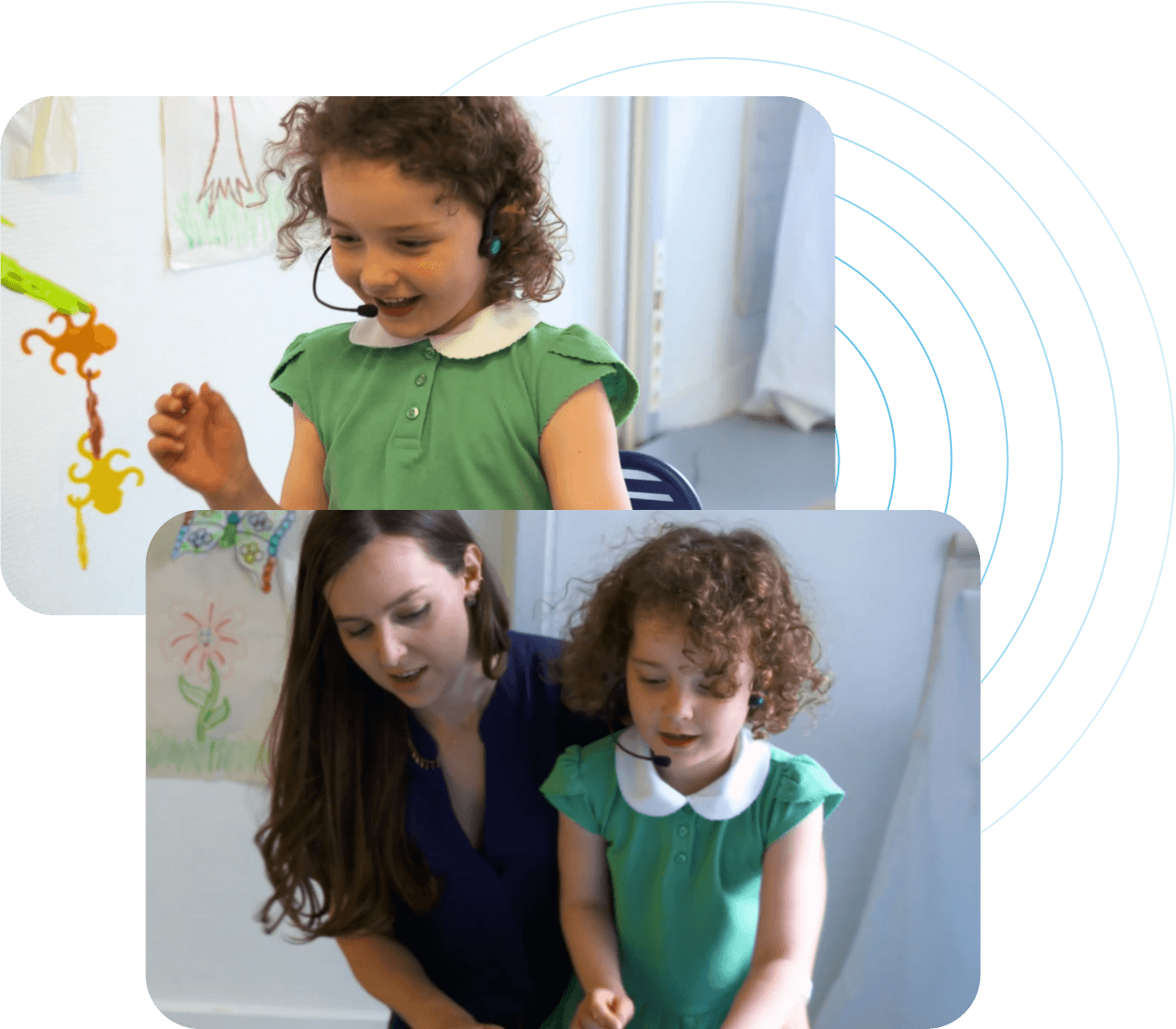

Who uses Forbrain®?

Forbrain is suitable for people of any age or ability level who want to develop their communication, memorization, attention, and other related skills. Learn how Forbrain can help you grow further!

Schoolchildren

Boost your child’s abilities to learn and interact at school for enhanced academic performance from Pre-K through to high school.

University Students

Perform at a higher level and alleviate the pressure of intense learning during study, job interviews, or extracurricular activities.

Professionals

Watch your career take off with newfound confidence. Forbrain is suitable for anyone who needs to use their voice to make an impact.

Seniors

Enhance your cognitive abilities for healthier aging, with improved mental wellbeing and quality of life.

Special Education Needs

Get better therapy results and support the growth of your child’s cognitive development for a stronger start in life.

Specialists & Therapists

Boost therapy outcomes for children and adults who struggle with improving their speech, attention, or learning abilities.

Our unique technology

High-sensitivity Microphone

Speak into the high-sensitivity microphone and it captures your voice’s sound waves, sending the information to the dynamic filter for processing.

Patented Dynamic Filter

To deliver your corrected voice, the patented filter continually amplifies high-frequency sounds and softens low frequencies while blocking environmental noise.

Bone Conduction Transducers

Sound is transmitted via the temporal bones. This bone conduction delivers auditory information to the brain 10x faster than air conduction (through the ear canals).

See Forbrain® up close

Patented Dynamic Filter: Processes and produces a corrected voice

Bone Conduction Transducers: Enhance sound transmission via the temporal bones

User Microphone: Captures the sound waves of your voice

Headphone Jack: Used to listen to audio recordings of training or online classes

Additional Microphone: For training support by the parent, teacher, or therapist

Scientific results on Forbrain

Research and scientific evaluation on Forbrain are the backbone of everything we do. Read the latest scientific literature here.
